Backpropagation: Difference between revisions


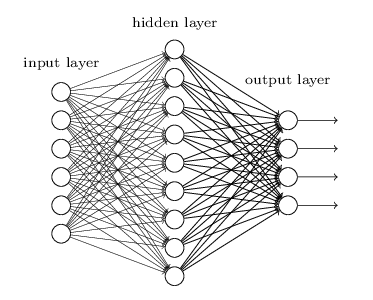

Revision as of 09:07, 15 September 2017

This is student work which has not yet been approved as correct by the instructor

Introduction

Backpropagation is a method for computing the gradient of the loss function with respect to the weights of an artificial neural network. It is commonly used as part of algorithms that improve the network's performance by adjusting the weights, for example gradient descent. It is also called backward propagation of errors.[2]
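As a rough illustration of the idea above, here is a minimal sketch in Python. It is not taken from the cited sources: the tiny network (one input, one sigmoid hidden unit, one linear output), the squared-error loss, and all names such as `backprop`, `train` and `learning_rate` are assumptions chosen only to keep the example short. The backward pass applies the chain rule from the loss back to each weight, and gradient descent then uses those gradients to adjust the weights.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w1, w2, x):
    """Forward pass: hidden activation h and network output y."""
    h = sigmoid(w1 * x)   # hidden unit (sigmoid is an assumed choice)
    y = w2 * h            # linear output unit
    return h, y


def loss(w1, w2, x, t):
    """Squared-error loss for one training pair (x, t); an assumed choice."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - t) ** 2


def backprop(w1, w2, x, t):
    """Backward pass: gradient of the loss with respect to each weight."""
    h, y = forward(w1, w2, x)
    dL_dy = y - t                        # derivative of the loss w.r.t. the output
    dL_dw2 = dL_dy * h                   # output weight: y = w2 * h
    dL_dh = dL_dy * w2                   # error propagated back to the hidden unit
    dL_dw1 = dL_dh * h * (1.0 - h) * x   # sigmoid'(z) = h * (1 - h), z = w1 * x
    return dL_dw1, dL_dw2


def train(w1, w2, x, t, learning_rate=0.1, steps=500):
    """Plain gradient descent driven by the backpropagated gradients."""
    for _ in range(steps):
        g1, g2 = backprop(w1, w2, x, t)
        w1 -= learning_rate * g1
        w2 -= learning_rate * g2
    return w1, w2


if __name__ == "__main__":
    w1, w2 = train(0.3, -0.2, x=1.5, t=0.8)
    print("loss before:", loss(0.3, -0.2, 1.5, 0.8))
    print("loss after: ", loss(w1, w2, 1.5, 0.8))
```

A real network has many weights per layer and uses matrix operations, but the pattern is the same: one forward pass, one backward pass that reuses the forward values, then a weight update.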

How does it work or a deeper look

- If you are discussing a THING YOU CAN TOUCH, you must explain how it works and the parts it is made of. Google around for an "exploded technical diagram" of your thing, maybe like this example of an MRI. It is likely you will reference outside links. Please attribute your work.

- If you are discussing a PROCESS OR ABSTRACT CONCEPT (like fuzzy logic) you must deeply explain how it works.

Examples

Please include an example of how your concept is actually used. Your example must include WHERE it is used and WHAT THE BENEFIT is of it being used.

Pictures, diagrams

Pictures and diagrams go a LONG way toward helping someone understand a topic, especially if your topic is a little abstract or complex. Using a picture or diagram is a two-part process:

External links

- http://neuralnetworksanddeeplearning.com/chap5.html

- http://neuralnetworksanddeeplearning.com/chap6.html

- http://rimstar.org/science_electronics_projects/backpropagation_neural_network_software_3_layer.htm

These links are here to help fellow students. Please make sure the content is good, and please don't link to Google search results.