Multi-layer perceptron (MLP)


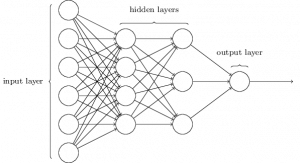


Latest revision as of 21:47, 6 April 2018

This is student work which has not yet been approved as correct by the instructor

Case study notes[1]

Introduction

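Multi-layer perceptrons are a type of feedforward neural network consisting of at least three layers of nodes: an input layer, one or more hidden layers, and an output layer. Each node outside the input layer is a neuron: it computes a weighted sum of the values it receives, applies an activation function to that sum, and passes the result on to the nodes in the next layer.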

<ref> https://en.wikipedia.org/wiki/Multilayer_perceptron</ref>
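The layer-by-layer flow described above can be sketched in a few lines of plain Python. This is only an illustrative sketch: the function names (`dense_layer`, `mlp_forward`), the choice of the sigmoid activation, and all weight values are assumptions made for the example, not something taken from the case study.

```python
import math

def sigmoid(x):
    """Non-linear activation that squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """One fully connected layer: every node sees every input value."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(weighted_sum))
    return outputs

def mlp_forward(inputs, layers):
    """Pass the inputs through each (weights, biases) layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical network: 2 inputs -> 3 hidden nodes -> 1 output node.
hidden = ([[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.1])
output = ([[0.7, -0.2, 0.4]], [0.05])

prediction = mlp_forward([1.0, 0.0], [hidden, output])
print(prediction)
```

Each inner list of weights belongs to one node, so the hidden layer has three rows of two weights (one per input) and the output layer has one row of three weights (one per hidden node).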

How does it work or a deeper look

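- Multi-layer perceptrons are trained with backpropagation: during the learning phase, the error at the output is propagated backwards through the network to work out how much each weight should change.

- Every node outside the input layer applies a non-linear activation function to its weighted input. The activation decides how strongly the node "fires" and therefore how much it influences the nodes in the next layer.

- MLPs are fully connected: each node in one layer is connected to every node in the next layer (for example, each hidden node is connected to each input node).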
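The three points above can be combined into a single training step. The sketch below is a minimal illustration under stated assumptions: a hypothetical 2-2-1 network, the sigmoid activation, a squared-error loss, and a learning rate of 0.5. None of these choices come from the case study, and the names `forward` and `train_step` are made up for the example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(x, p):
    """Return the hidden activations and the single output activation."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(p["w_hidden"], p["b_hidden"])]
    out = sigmoid(sum(w * h for w, h in zip(p["w_out"], hidden)) + p["b_out"])
    return hidden, out

def train_step(x, target, p, lr=0.5):
    """One backpropagation update (in place); returns the loss before the update."""
    hidden, out = forward(x, p)
    loss = 0.5 * (out - target) ** 2

    # Output node: error times the sigmoid derivative out * (1 - out).
    delta_out = (out - target) * out * (1.0 - out)

    # Hidden nodes: send the output error backwards through w_out.
    delta_hidden = [delta_out * w * h * (1.0 - h)
                    for w, h in zip(p["w_out"], hidden)]

    # Gradient descent: move every weight against its gradient.
    for j, h in enumerate(hidden):
        p["w_out"][j] -= lr * delta_out * h
    p["b_out"] -= lr * delta_out
    for j, d in enumerate(delta_hidden):
        for i, xi in enumerate(x):
            p["w_hidden"][j][i] -= lr * d * xi
        p["b_hidden"][j] -= lr * d
    return loss

# Hypothetical starting weights for a 2-input, 2-hidden-node, 1-output network.
params = {
    "w_hidden": [[0.5, -0.4], [0.3, 0.8]],
    "b_hidden": [0.0, 0.0],
    "w_out": [0.7, -0.2],
    "b_out": 0.0,
}

# Repeat the update on one training example and watch the loss shrink.
losses = [train_step([1.0, 0.0], 1.0, params) for _ in range(20)]
print(losses[0], losses[-1])
```

The hidden-layer errors are computed before any weight is changed, so the backward pass uses the same weights as the forward pass. A real training run would loop over many examples rather than a single one.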

External links
